Why Some AI Models Are Harder to Influence Than Others

Topic: Reporting

Published:

Written by: Clearscope

Trying to “influence AI” can feel like trying to influence the weather.

You publish content. You optimize it. You wait. Sometimes your brand shows up in AI answers. Sometimes it doesn’t. It feels random, but it’s not random at all, and it’s definitely not the same across models. Two AI systems can answer the exact same prompt and look nearly identical on the surface. Underneath, though, they may be operating in completely different ways.

And that difference is what determines influence. It’s not just about what a model knows. It’s about how it gathers information before it responds.

The reality: The way a system retrieves information shapes who gets seen.

Influence Begins With (and Depends On) Retrieval Behavior

Search behavior determines how open a system is to new information: Some models search the web before almost every answer. Others mostly rely on what they already know and only search when they think it’s necessary.

That one difference determines how often new content has the chance to shape what the AI says.

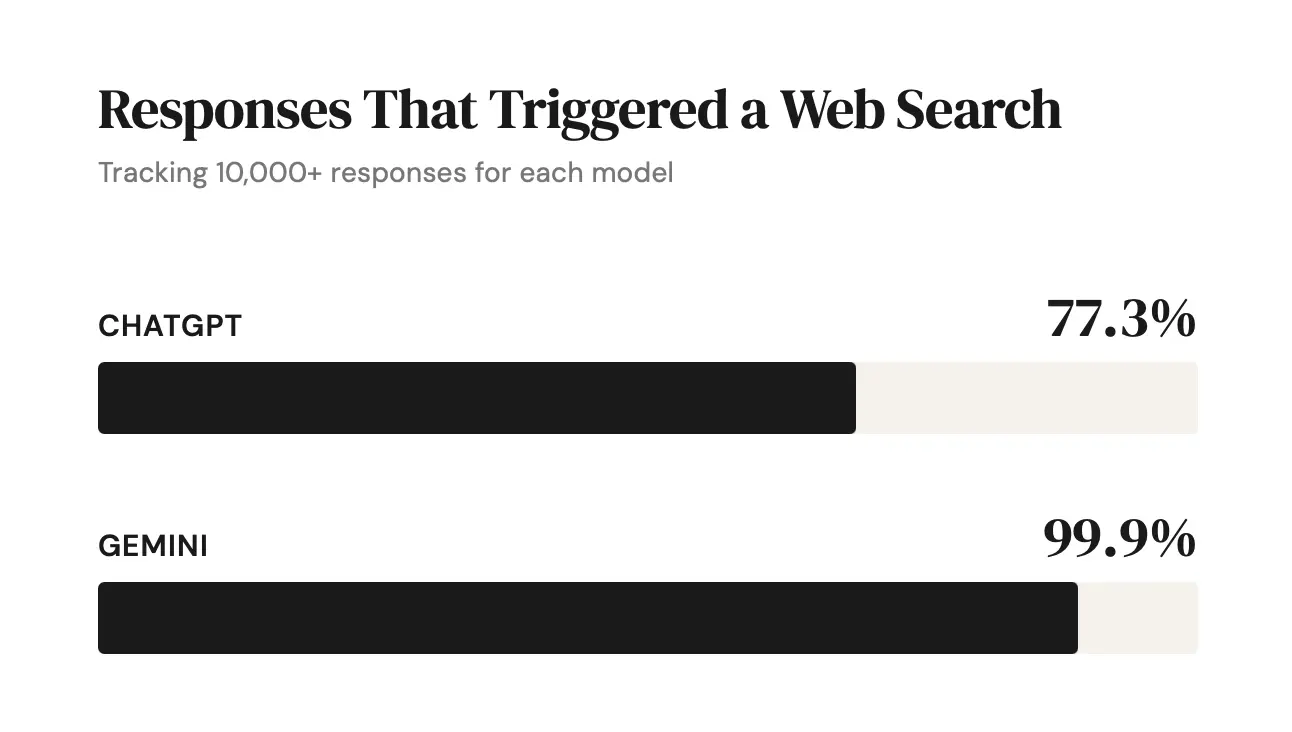

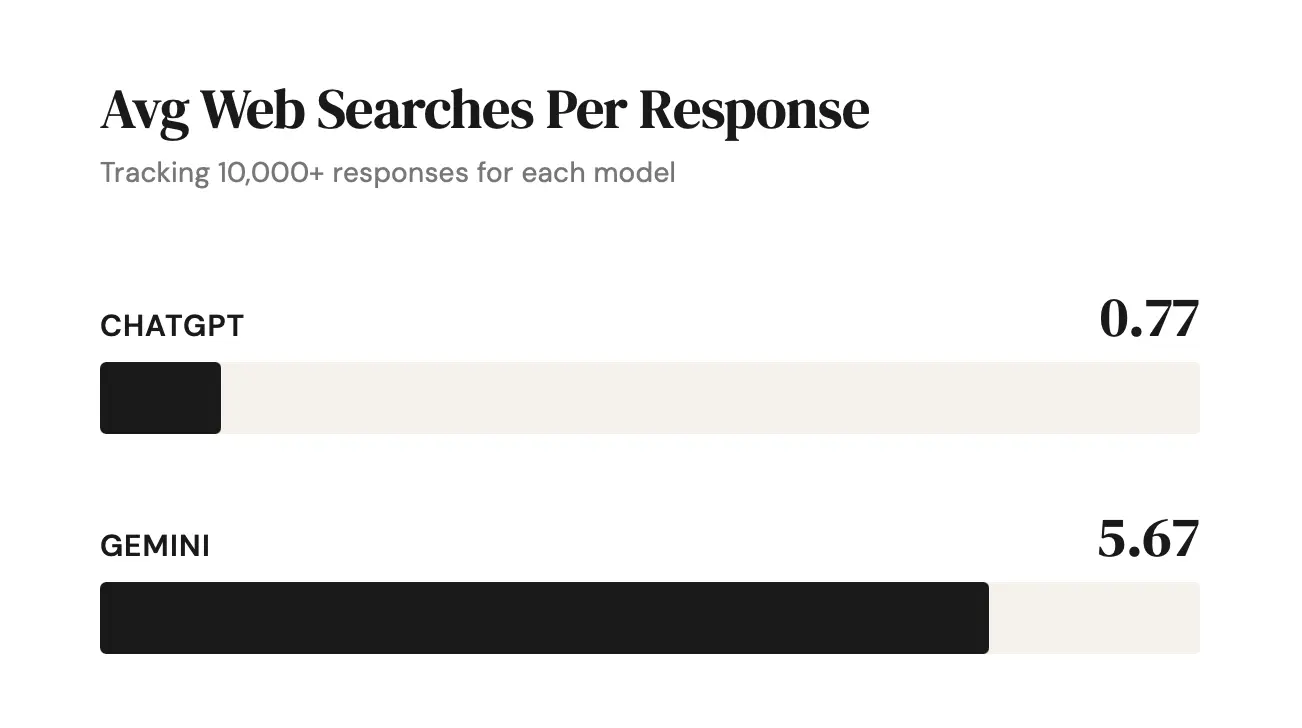

When we analyzed thousands of AI responses, a clear pattern emerged. Gemini initiated web searches before nearly every answer. GPT searched less consistently and typically ran fewer web searches per response.

The difference isn’t whether they search at all. Both do. The difference is how central and extensive search is in the answer-building process.

That difference doesn’t just affect how answers are built. It affects how accessible each system is to new voices.

When a model searches before responding, the web becomes part of the answer-building process. The AI pulls in what’s discoverable at that moment, synthesizes it, and constructs a response based on what it finds.

In that environment, visibility is fluid. Content can enter or exit the answer set depending on how well it aligns with the kinds of searches the model runs. If your page is indexed, relevant, and aligned with those queries, it has repeated opportunities to surface. The evidence the model draws from isn’t fixed. It’s recalculated every time the model searches.

As the model experiments with different query variations, new entry points open up. Visibility can expand as the model’s search behavior expands.

But when a model searches selectively, the dynamic shifts. Answers are generated more often from internal knowledge, with search used only occasionally. In that case, it’s harder to break in quickly. Visibility depends less on real-time discoverability and more on whether your brand, concept, or source is already reinforced across the broader web ecosystem.

Influence builds more gradually. The competitive frame is more stable. The practical difference comes down to permeability: how easily new content can work its way into the answers a model gives.

In a search-first system, influence is spread across live, retrievable content. In a recall-first system, influence is concentrated inside a relatively stable internal knowledge structure.

Both models can reference external sources. But the more frequently a model searches — and the more it varies those searches — the more opportunity exists for new content to shape the outcome.

That’s the real strategic difference.

Opportunity Expands When Search Expands

At first glance, AI answers can feel unpredictable. Sources shift. Citations rotate. A brand that appeared yesterday may not appear today.

It’s easy to interpret that movement as randomness. But volatility doesn’t mean chaos.

When you look at patterns over time, structure emerges. The same clusters of queries tend to repeat. The same groups of brands and domains resurface. Even search-heavy models operate within boundaries. They don’t pull evenly from the entire internet. They repeatedly draw from a more limited, recurring set of sources.

That’s why visibility isn’t about showing up once. It’s about becoming part of that recurring set. When a source enters that rotation, influence builds over time. When it doesn’t, volatility simply makes the absence visible.

At the same time, something else is happening.

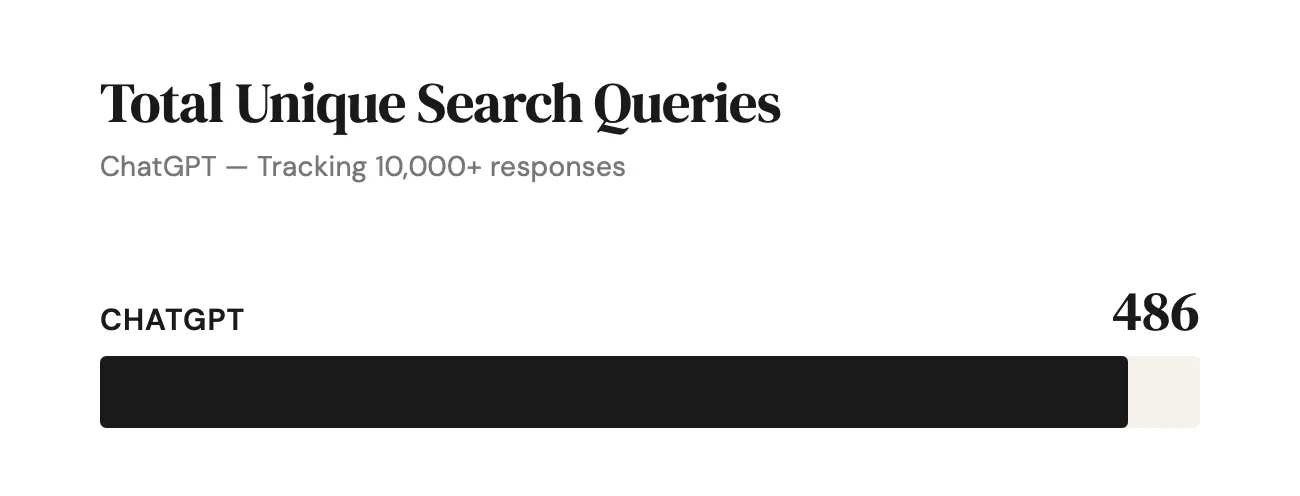

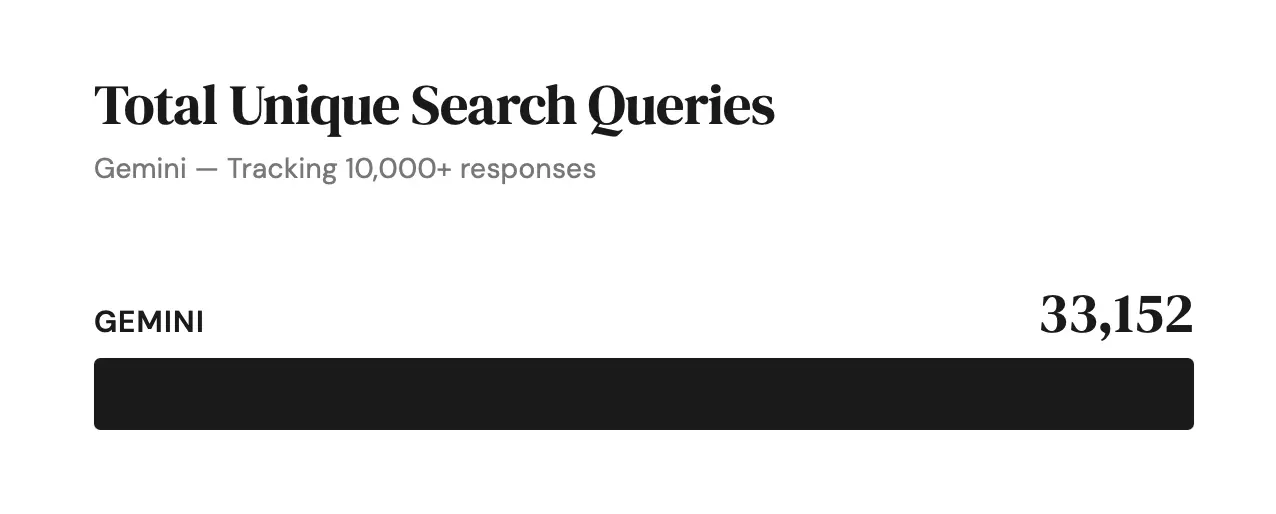

Search-driven models are generating more varied and more specific queries. Instead of staying at broad, high-level topics, they move deeper into detailed, niche questions — what we call the long long tail.

In search-heavy systems, this creates expanding opportunity:

As query variation increases, new entry points emerge

Each additional query is another chance to surface

Discoverability grows as the model broadens the range of questions it asks
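To see why each additional query matters, consider a deliberately simple toy model that is not drawn from our data: assume every search the model runs has the same, independent chance p of surfacing your page. The chance of appearing at least once across n searches is then 1 - (1 - p)^n, which climbs as n grows. The function name and the 5% figure below are hypothetical, and real searches are neither independent nor equally likely to surface a given page.

```python
def chance_to_surface(p_per_query: float, num_queries: int) -> float:
    """Probability that a page surfaces at least once across several searches.

    Hypothetical toy model: assumes each search independently surfaces the
    page with the same probability p_per_query. Real AI systems' searches
    are neither independent nor uniform, so treat this as illustration only.
    """
    if not 0.0 <= p_per_query <= 1.0:
        raise ValueError("p_per_query must be between 0 and 1")
    if num_queries < 0:
        raise ValueError("num_queries must be non-negative")
    # Complement rule: 1 minus the chance of missing on every single search.
    return 1.0 - (1.0 - p_per_query) ** num_queries


if __name__ == "__main__":
    # Illustrative per-search chance only, not a measured value.
    for n in (1, 3, 10):
        print(n, round(chance_to_surface(0.05, n), 3))
```

Even with a modest per-search chance, more searches compound into more opportunity, which is the dynamic search-heavy systems create.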

In recall-heavy systems, the feedback loop is slower:

The model recalibrates less frequently through fresh searches

Changes on the web take longer to influence answers

Competition remains comparatively stable

The difference is straightforward. Systems that constantly recalculate through search create faster-moving opportunity. Systems that rely more on internal knowledge evolve more gradually.

And that determines how quickly new content can shape AI outputs.

Why GPT Is Harder to Influence

GPT searches less frequently and relies more on internal knowledge. That makes its competitive environment more stable — and harder to break into.

Influence depends less on real-time discoverability and more on long-term representation. You’re not just competing in search results; you’re competing for presence inside a relatively fixed internal knowledge structure.

Visibility builds gradually. Influence accumulates through reinforcement, not rapid entry.

Why Gemini Creates More Opportunity

Gemini searches frequently and varies its queries. Each search reopens the field.

That creates more recurring opportunities to surface.

Visibility still isn’t random — the model operates within patterns — but live web content directly shapes answers. Strong discoverability and comprehensive coverage can influence outputs more quickly.

In simple terms:

GPT leans more heavily on long-term authority embedded in its internal knowledge.

Gemini still rewards authority, but because it searches more frequently, discoverability plays a larger role in shaping outcomes.

And that difference changes strategy.

AI Visibility Is Model-Specific

Here’s the core takeaway: there is no single “AI visibility” strategy.

Influence varies by model because retrieval behavior varies by model.

In search-first systems like Gemini, optimization looks a lot like search. You need to understand the kinds of queries the model runs, ensure your content is discoverable, and align with the topics and angles it repeatedly explores.

In recall-first systems like GPT, optimization looks more like positioning. Inclusion depends less on short-term search performance and more on whether your brand or ideas are consistently represented across the broader web ecosystem.

The difference comes down to one question:

Does the system search before it speaks?

If it does, opportunity lives in discoverability.

If it doesn’t, opportunity lives in long-term reinforcement.

Once you understand that, the black box becomes far less mysterious. You stop trying to influence “AI” as a single thing and start focusing on how each model actually builds its answers.
