We're Running an AEO Experiment on Ourselves — And Documenting Everything

Topic: AEO

Written by: Clearscope

We talk a lot about optimizing for AI search. But we wanted to go further than theory.

So we designed a real experiment to test whether a repeatable content process can actually move the needle on brand mentions inside AI-generated answers. We're using our own product to run it. We're documenting every decision, every data point, and every result, including the ones that don't go as planned.

We're calling it the AEO Tells All experiment. Here's exactly what we're doing and why.

The Problem We're Trying to Solve

Most brands have no idea whether they're being mentioned — or ignored — by ChatGPT, Gemini, and Perplexity when users ask questions in their category. There's no rank tracker for AI-generated answers. No impression data. No notification when a competitor gets recommended instead of you.

The result is that most content teams are investing in AEO based on theory, not measurement. They're publishing content, optimizing for AI search, and hoping it's working — with no reliable way to know whether it is.

We wanted to change that. Specifically, we wanted to answer a question that nobody in the industry has documented with real data yet:

If you identify the specific web searches that AI platforms use to construct their answers, and publish content that directly targets those searches, does your brand mention rate in AI-generated responses actually go up?

That's the hypothesis. And we're testing it on ourselves.

The Setup

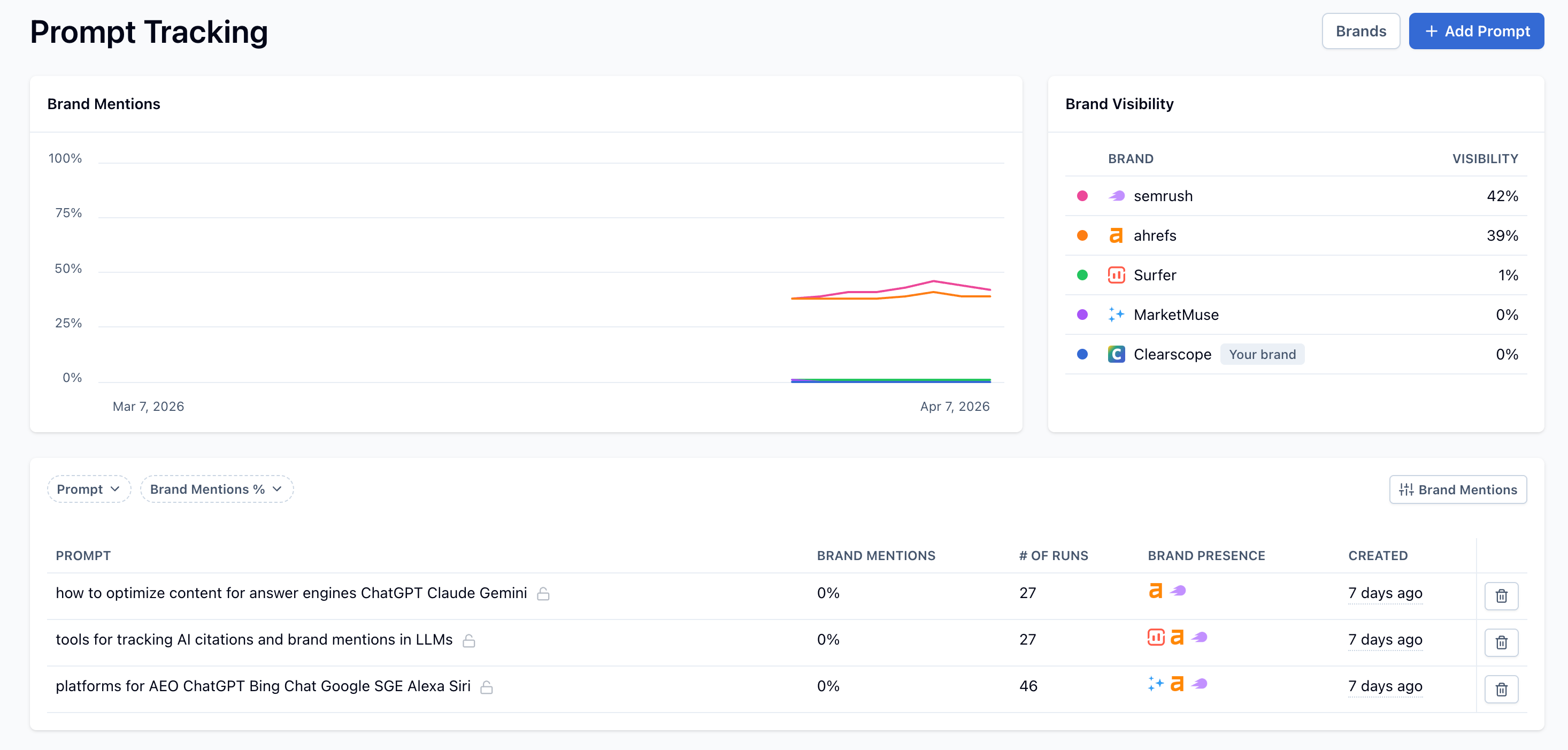

Clearscope's Prompt Tracking feature lets you run any prompt at scale across AI platforms — hundreds of times — and measure how often your brand appears in the responses. It's the closest thing to a brand mention rate metric that exists for AI-generated answers right now.

For this experiment, we're using Prompt Tracking to establish a baseline, run the content intervention, and measure the outcome. The AI platform we're primarily focused on is Gemini, with ChatGPT as a secondary platform.

Our seed topic: "Answer Engine Optimization."

This is a commercially relevant category for Clearscope. It's where our Prompt Tracking feature lives, and it's a space where competitors are currently being mentioned in AI responses. We wanted to test whether we could earn Clearscope a primary brand recommendation in that conversation.

The Methodology: Five Steps

Step 1: Run a temp prompt to surface the query fan-out

The first insight behind this experiment came from our team: rather than guessing which prompts to target, we could use a high-level "seed" prompt to let the AI's own behavior tell us.

We ran "Answer Engine Optimization" as a temp prompt in Clearscope (100 responses through Gemini) and looked at the web searches Gemini triggered to construct its answers. AI responses aren't deterministic, so a single run tells you very little. Across 100 responses, you get frequency data that separates the web searches Gemini triggers consistently from the ones that surface only once or twice. This is the query fan-out: the specific searches the AI runs in real time to build its response.

Here's what the top of that fan-out data looked like:

AEO vs SEO differences and similarities

what is answer engine optimization AEO

best practices for answer engine optimization 2024 2025

how to optimize for answer engines like ChatGPT Perplexity SGE

tools for tracking AI citations and brand mentions in LLMs
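
If you want to tally fan-out data by hand, the counting step can be sketched in a few lines of Python. This is a minimal illustration, not a Clearscope API: it assumes you have exported each response's web searches as a list of strings, and the function names, the 10% threshold, and the sample data are all hypothetical.

```python
from collections import Counter

def normalize(query):
    """Lowercase and collapse whitespace so trivial variants count together."""
    return " ".join(query.lower().split())

def fan_out_frequencies(responses):
    """Return (query, responses_containing_it, share_of_responses), most frequent first.

    `responses` holds one entry per AI response; each entry is the list of
    web searches that response triggered (an assumed export format).
    """
    counts = Counter()
    for searches in responses:
        # A set counts each query at most once per response.
        counts.update({normalize(s) for s in searches})
    total = len(responses)
    return [(q, n, n / total) for q, n in counts.most_common()]

def consistent_queries(responses, min_share=0.10):
    """Keep queries that appear in at least `min_share` of responses."""
    return [q for q, _, share in fan_out_frequencies(responses) if share >= min_share]

# Made-up miniature data, for illustration only.
sample = [
    ["what is answer engine optimization AEO", "AEO vs SEO differences and similarities"],
    ["What is answer engine optimization AEO"],
    ["what is answer engine optimization aeo", "a search that only shows up once"],
    ["AEO vs SEO differences and similarities"],
]

for query, n, share in fan_out_frequencies(sample):
    print(f"{share:>4.0%}  {n}  {query}")
```

Counting each query once per response keeps a single chatty response from inflating a query's share. The threshold in `consistent_queries` is where you decide what "consistently triggered" means for your own data.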

Step 2: Select 3 commercial-intent prompts from the fan-out

Not all fan-out queries are equal. Many of the highest-frequency results were informational — "what is AEO," "AEO vs SEO differences" — which tells us Gemini is treating the seed prompt as a definitional question. Those queries will generate citations for AEO explainers, not tool recommendations.

We needed fan-out queries with commercial intent — the ones where Gemini would be likely to recommend tools and platforms, not just explain concepts. After reviewing the full fan-out data, we selected these three as our seed prompts for Prompt Tracking:

"tools for tracking AI citations and brand mentions in LLMs"

"platforms for AEO ChatGPT Bing Chat Google SGE Alexa Siri"

"how to optimize content for answer engines ChatGPT Claude Gemini"

These were added to Prompt Tracking and run at 20 responses each to generate the next layer of fan-out data.
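
Selecting the three prompts was a manual review, but a rough first pass can set aside the obviously definitional queries before a human looks at the rest. The sketch below is one hypothetical way to do that. The patterns are assumptions for illustration, and a query that survives the filter is only a candidate for review, not a confirmed commercial-intent prompt.

```python
import re

# Hypothetical patterns that suggest a definitional (informational) query.
DEFINITIONAL_PATTERNS = [
    r"^what (is|are)\b",
    r"\bvs\.?\b",
    r"\bdifferences?\b",
    r"\bdefinition\b",
    r"\bmeaning\b",
]

def is_definitional(query):
    """True if the query matches any of the definitional patterns."""
    q = query.lower().strip()
    return any(re.search(pattern, q) for pattern in DEFINITIONAL_PATTERNS)

def review_candidates(queries):
    """Queries left over for manual commercial-intent review."""
    return [q for q in queries if not is_definitional(q)]

fan_out = [
    "AEO vs SEO differences and similarities",
    "what is answer engine optimization AEO",
    "best practices for answer engine optimization 2024 2025",
    "how to optimize for answer engines like ChatGPT Perplexity SGE",
    "tools for tracking AI citations and brand mentions in LLMs",
]

for query in review_candidates(fan_out):
    print(query)
```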

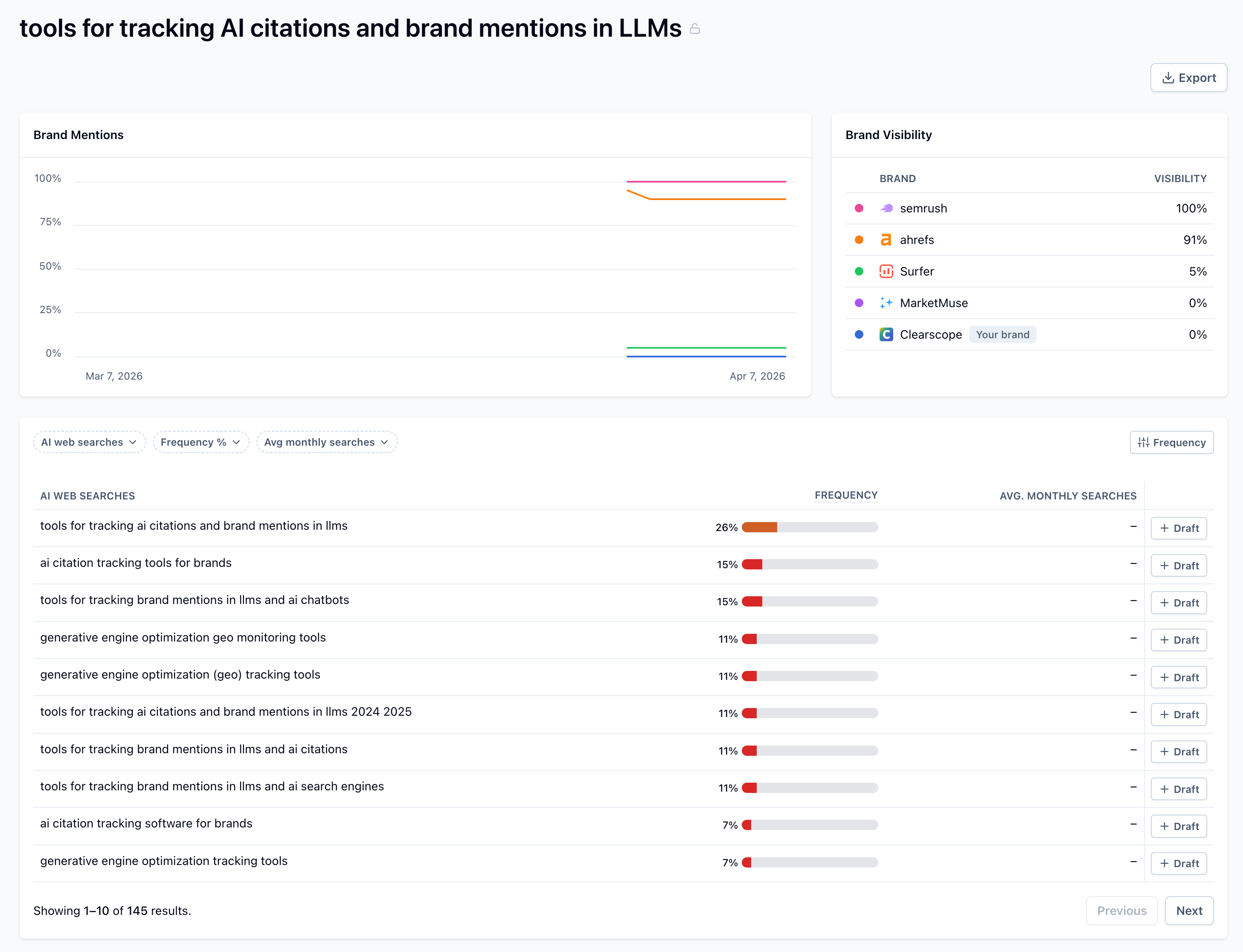

Step 3: Run bulk responses and identify the top web searches per prompt

With the three seed prompts tracked, we ran 20 bulk responses per prompt and looked at the web searches each one triggered. This gave us the second-level fan-out — the specific queries feeding each tracked prompt.

Here's what we found:

From "tools for tracking AI citations and brand mentions in LLMs":

tools for tracking ai citations and brand mentions in llms

generative engine optimization (geo) tracking tools

ai citation tracking tools for brands

From "platforms for AEO ChatGPT Bing Chat Google SGE Alexa Siri":

how to optimize for chatgpt bing chat google sge alexa siri aeo

aeo strategies for chatgpt bing chat google sge alexa siri

what is aeo answer engine optimization vs seo

From "how to optimize content for answer engines ChatGPT Claude Gemini":

how to optimize content for answer engines chatgpt claude gemini aeo strategies

how chatgpt claude gemini crawl and index content for answers

how to optimize content for chatgpt claude gemini answer engine optimization aeo

These nine queries are our content targets. They're not guesses or keyword research outputs. They're the exact searches Gemini runs to construct its answers to our three tracked prompts.
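
Picking the top searches per tracked prompt can be sketched as follows. This assumes a hypothetical export shaped as a dictionary from each prompt to its list of responses, where each response is the list of web searches it triggered. The names and placeholder data are illustrative only.

```python
from collections import Counter

def top_searches(responses, k=3):
    """The k web searches that appear in the most responses."""
    counts = Counter()
    for searches in responses:
        # Count each search at most once per response.
        counts.update({s.lower().strip() for s in searches})
    return [query for query, _ in counts.most_common(k)]

def content_targets(runs, k=3):
    """Map each tracked prompt to its k most frequent web searches."""
    return {prompt: top_searches(responses, k) for prompt, responses in runs.items()}

# Placeholder data: two prompts with a handful of responses each.
runs = {
    "prompt A": [["a1", "a2", "a3", "a4"], ["a1", "a2", "a3"], ["a1", "a2"], ["a1"]],
    "prompt B": [["b1", "b2", "b3"], ["b1", "b2"], ["b1"]],
}

targets = content_targets(runs)
for prompt, queries in targets.items():
    print(prompt, "->", queries)
```

With three tracked prompts and `k=3`, this yields nine targets. Ties in frequency are broken arbitrarily here, so a real run would need an explicit tie-breaking rule.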

Step 4: Publish 9 net-new blog posts targeting the fan-out queries

For each of the nine fan-out queries, we're producing a dedicated blog post, optimized using Clearscope's content grading tools against the specific query. Each post is designed to be the clearest, most authoritative answer to that specific search, structured for AI retrievability.

The nine posts are:

Optimizing Content for Answer Engines: Strategies for ChatGPT, Claude, and Gemini

Optimizing for AI Search in 2026: A Strategic Imperative for Content Evolution

The Future of AI: Attribution in Conversational Models Like ChatGPT, Claude, and Gemini

Top Generative Engine Optimization (GEO) Tracking Tools

AI Citation Tracking for Brands: The Essential Guide

Tools for Tracking AI Citations and Brand Mentions in LLMs

AEO Strategies for AI Search Engines: ChatGPT, Google, Bing Copilot, and Beyond

How to Optimize for ChatGPT, Bing Copilot, Google SGE, Alexa, and Siri

How to Optimize Content for Answer Engine Optimization (AEO)

Each post targets a specific fan-out query, is written to directly answer that query, and includes Clearscope naturally in context where it's the relevant solution.

Step 5: Re-measure after 60–90 days

Once the posts are published and indexed, we'll re-run our three tracked prompts through Prompt Tracking and measure whether Clearscope's brand mention rate has moved.

The metrics we're tracking:

Brand mention rate per prompt — the percentage of AI responses that include "Clearscope"

Share of voice — Clearscope's mention rate vs. competitors across the same prompts

Baseline vs. post-intervention — did the rate go up? By how much? For which prompts?
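
The three metrics above reduce to simple arithmetic. Here is a minimal sketch, assuming each response is available as plain text. The substring matching, the share-of-voice formula (our rate divided by the summed rates of all listed brands), and the competitor names `ToolA` and `ToolB` are assumptions for illustration, not how Prompt Tracking computes them.

```python
def mention_rate(responses, brand):
    """Fraction of response texts that mention `brand` (case-insensitive substring)."""
    if not responses:
        return 0.0
    hits = sum(brand.lower() in text.lower() for text in responses)
    return hits / len(responses)

def share_of_voice(responses, brand, competitors):
    """Brand's mention rate divided by the summed rates of brand plus competitors.

    This formula is an assumption; other definitions of share of voice exist.
    """
    rates = {name: mention_rate(responses, name) for name in [brand, *competitors]}
    total = sum(rates.values())
    return rates[brand] / total if total else 0.0

def rate_change(baseline, post, brand):
    """Post-intervention mention rate minus baseline mention rate."""
    return mention_rate(post, brand) - mention_rate(baseline, brand)

# Made-up responses; ToolA and ToolB are placeholder competitor names.
baseline = [
    "Try ToolA or ToolB.",
    "ToolB is a solid option.",
    "Clearscope and ToolA both track this.",
    "No specific tool recommended.",
]
post = [
    "Clearscope is one option.",
    "Clearscope and ToolA both track this.",
    "ToolB is a solid option.",
    "No specific tool recommended.",
]

print(f"baseline rate:  {mention_rate(baseline, 'Clearscope'):.0%}")
print(f"share of voice: {share_of_voice(baseline, 'Clearscope', ['ToolA', 'ToolB']):.0%}")
print(f"change:         {rate_change(baseline, post, 'Clearscope'):+.0%}")
```

Plain substring matching will miss misspellings and can over-match short brand names, so treat it as a starting point.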

We're capturing screenshots at every stage of the experiment so the final case study shows the complete before-and-after picture.

Why We're Sharing This

The AEO space is full of advice and short on proof. Everyone has a framework. Nobody has documented a controlled experiment with real before-and-after data.

We want to show the actual process — the data, the decisions, the methodology, and the outcome — not just the highlight reel. If the experiment works, we'll have a documented, repeatable playbook that any content team can apply to their own brand. If it doesn't work the way we expected, that's worth publishing too.

A few things we'll be honest about in the final case study:

Which prompts moved and which didn't

How long it took for content to influence AI responses

Whether the commercial-intent prompts behaved differently from the informational ones

What we'd do differently if we ran it again

The Bigger Principle at Work

The methodology itself is the story here. The fan-out approach — using a high-level seed prompt to reverse-engineer which content an AI actually needs to cite your brand — is something any content team can apply right now, with or without waiting for our results.

The reason we expect it to work comes down to how modern AI search functions. AI platforms like Gemini don't answer from memory alone. They run real-time web searches to retrieve current content before generating a response. That retrieval layer is where visibility is won or lost, not in training data and not in a model update six months from now.

There's no next training cycle to wait for. The content that gets cited today is the content that's retrievable today.

Which means if you can identify which searches are feeding the AI's answer to your most important prompts, you know exactly what to build. That's what this experiment is designed to prove.
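
To make the retrieval argument concrete, here is a deliberately simplified toy model in Python. It is not how Gemini or any real platform works internally: the keyword-overlap search, the tiny page index, and the brand name `ToolA` are all assumptions. It only illustrates the idea that an answer can draw on a brand only if a page mentioning it is retrieved for one of the fan-out searches.

```python
def search(index, query, top_k=2):
    """Toy retrieval: rank pages by how many query words they contain."""
    words = set(query.lower().split())
    scored = []
    for url, text in index.items():
        overlap = len(words & set(text.lower().split()))
        if overlap:
            scored.append((overlap, url))
    # Highest overlap first; URL breaks ties so results are deterministic.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [url for _, url in scored[:top_k]]

def brands_available_to_answer(index, fan_out, brands):
    """Brands mentioned on any page retrieved for any fan-out search."""
    retrieved = {url for query in fan_out for url in search(index, query)}
    text = " ".join(index[url] for url in retrieved).lower()
    return sorted(b for b in brands if b.lower() in text)

# Hypothetical index before any new content is published.
index = {
    "a.example/geo-tools": "ToolA is one of the tools for tracking ai citations",
    "b.example/recipes": "banana bread recipe",
}
fan_out = ["tools for tracking ai citations"]
brands = ["Clearscope", "ToolA"]

before = brands_available_to_answer(index, fan_out, brands)

# Publish a page that directly targets the fan-out search.
index["c.example/clearscope"] = (
    "Clearscope offers tools for tracking ai citations and brand mentions"
)
after = brands_available_to_answer(index, fan_out, brands)

print(before, "->", after)
```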

We'll publish the full case study, with data, screenshots, and findings, once we have results. In the meantime, if you want to run a version of this experiment on your own brand, the starting point is establishing your baseline brand mention rate. What percentage of AI-generated responses to your most important commercial prompts actually mention your brand today? Most teams don't know, which means they have no way to measure whether anything they're doing is working.

That's exactly what Prompt Tracking was built for.

So what are you waiting for? Get started today.
